Jordan Richards and Dr. Abdullah Al Rashdi

A few months ago, a senior manager from Oman shared an observation that reflects a growing institutional tension.

He had spent three decades in a high-risk industrial environment defined by complex systems, unforgiving margins, and zero tolerance for error. When he retired, his successor arrived equipped with advanced dashboards, AI-powered analytics, and predictive models far more sophisticated than anything previously available.

Within weeks, however, the organisation confronted a familiar question.

Why are we still making mistakes we already learned how to avoid?

This question sits at the centre of what we call the disruption of intelligence. We possess more computational power, more data, and more automated insight than at any point in history. Yet across organisations, and increasingly at national scale, something essential is weakening: continuity of judgment.

Not just knowledge. Intent.

Artificial intelligence is not simply accelerating processes. It is reshaping how institutions remember, how decisions are justified, and how reasoning is preserved across generations. The issue is not whether AI works. The issue is whether institutional memory is engineered with the same seriousness as technological capability.

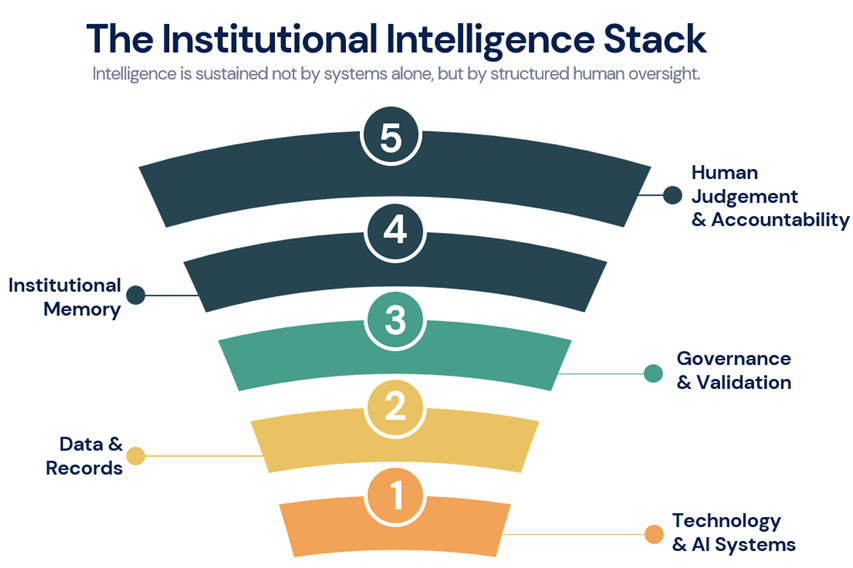

Intelligence Has Always Been More Than Processing Power

For most of human history, intelligence lived in people before it lived in systems. It accumulated through experience, repetition, mentorship, and failure. It moved through organisations in conversations, cautionary stories, and tacit professional instincts.

Ask any experienced engineer, regulator, or policy advisor how they learned their craft and you rarely hear about manuals. You hear about near misses. You hear about moments when a senior colleague intervened and said, “Let me explain why we do it this way.” You hear about procedures that were technically correct but dangerously incomplete.

What is transferred in those exchanges is not simply information. It is intent – the reasoning behind decisions, the risks consciously accepted, the alternatives deliberately rejected.

Research in organisational learning has long recognised that critical knowledge is often tacit, embedded in judgment and context rather than documentation. It is slow to develop and easy to lose.

AI, by contrast, is fast. It detects patterns at scale, optimises decisions in milliseconds, and produces outputs with statistical confidence. It does not retire or fatigue. That is its power.

It is also its limitation.

Intelligence is not only about answering questions. It is about knowing which questions matter, when to hesitate, and when experience should override probability. Pattern recognition does not capture the moral weight of a decision, the regulatory implications of deviation, or the subtle signals that precede failure.

Figure 1.1 The Institutional Intelligence Stack

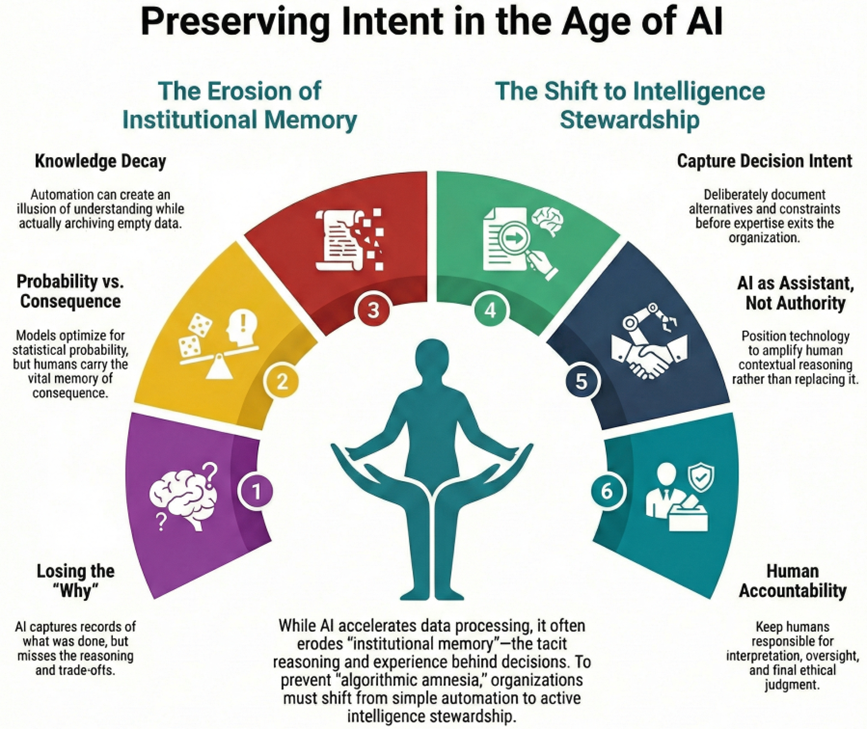

The Quiet Erosion of Institutional Memory

Across sectors such as energy, aviation, healthcare, and public administration, a familiar pattern is emerging.

Experienced professionals leave. Systems remain. AI tools are introduced to capture knowledge or support decisions. Yet resilience does not automatically increase.

Most systems capture records, not reasoning.

Documents show what was done. They rarely explain why it was done, what alternatives were debated, or what constraints shaped the final choice. Decisions become artefacts stripped of pressure and trade-offs.

When intent disappears, organisations experience institutional forgetting. Actions are preserved. Judgment erodes.

AI systems ingest these records as if they represent complete understanding. They do not. They reflect what was written down, not what was sensed, debated, or cautiously avoided.

As one senior executive observed:

“We automated intelligence without stabilising understanding.”

AI can accelerate knowledge decay by creating the illusion that understanding has been transferred when it has merely been archived.

Figure 1.2 Preserving Intent in the Age of AI

When Confidence Outpaces Comprehension

One underestimated risk of AI adoption is overconfidence.

Machine-generated recommendations arrive ranked, scored, and statistically justified. They appear precise. In complex environments, that precision is reassuring.

Yet automation bias remains real: people favour system outputs even when contradictory signals exist. The more sophisticated the model appears, the stronger that tendency becomes.

Organisations override hesitation because “the model says it is acceptable,” only to discover that the model had never encountered a rare but catastrophic edge case.

AI optimises for probability. Humans carry memory of consequence.

Probability and consequence are not identical.

The objective is not to romanticise human decision-making, but to integrate statistical precision with contextual vigilance. Resilient institutions treat AI as decision support, not authority.

The Human Cost of Algorithmic Amnesia

The disruption of intelligence is cultural as much as technical.

Junior professionals defer to systems rather than developing independent judgment. Senior experts feel reduced to validators of outputs rather than stewards of reasoning. Retirees depart with knowledge never fully externalised.

Over time, organisations stop asking experienced professionals why systems work as they do. They ask the system instead.

When automation absorbs routine tasks and leaves humans with rare anomalies, skills atrophy. Situational awareness declines. The capacity to intervene weakens.

This is not a failure of technology. It is a failure of governance and design.

In high-risk environments, the difference between a failure of technology and a failure of governance is not theoretical; it is operational.

Intelligence as a Social Contract

At national scale, the implications deepen.

Governments are accelerating AI deployment across policy modelling, service delivery, infrastructure planning, and security. The promise is efficiency and scale.

The risk is institutional amnesia.

Intelligence is not purely technical. It is social. It depends on mentorship, trust, ethical framing, and continuity between generations. AI can augment those elements, but it cannot replace the social architecture that sustains reasoning.

When intelligence becomes centralised in models rather than distributed across experienced practitioners, resilience weakens. When policy decisions rely exclusively on pattern recognition without historical context, nations risk repeating strategic errors with greater speed and confidence.

National digital strategies must move beyond infrastructure and data platforms. They must address how institutional memory is preserved, validated, and refreshed.

From Knowledge Capture to Intelligence Stewardship

The way forward is not resistance to AI. That would be impractical and counterproductive.

The challenge is positioning AI within a broader human intelligence architecture rather than above it.

Used well, AI surfaces patterns humans might overlook, strengthens predictive capacity, and supports decision-makers under pressure. Used poorly, it becomes a substitute for thinking.

Institutions navigating this transition successfully exhibit three consistent traits.

They treat AI as an assistant, not an authority.

They deliberately capture decision intent, not merely outcomes, before expertise exits the organisation.

They keep humans accountable for interpretation, oversight, and final judgment.

This reflects a principle of complementarity. Technology amplifies distinctively human strengths such as contextual reasoning, ethical awareness, and adaptive thinking. It does not replace them.

In regulated and high-risk environments, this distinction is operationally critical. Accountability cannot be delegated to probability models.

The Choice Ahead

We are not facing a future in which machines replace human intelligence. We are facing a future in which intelligence is redefined, sometimes carelessly.

The true disruption is not that AI can think faster. It is that institutions may stop remembering why certain judgments mattered.

If we approach AI purely as a productivity multiplier, we risk constructing systems that look intelligent, sound intelligent, and fail in profoundly human ways.

If we approach it as a component within a broader institutional intelligence framework, we can build something more durable: a partnership in which AI accelerates insight, human judgment remains accountable, and institutional memory is consciously stewarded rather than passively archived.

Global risk assessments increasingly highlight AI-driven decision-making without clear accountability as a systemic governance risk. The disruption of intelligence is not theoretical. It is already unfolding across sectors and nations.

The decisive question is whether institutions will manage this transition deliberately, embedding intent and accountability into digital systems, or allow reasoning itself to become the first casualty of technological acceleration.

The future advantage will not belong to the most automated organisation or the most digitised nation. It will belong to those that preserve judgment alongside innovation, and continuity alongside change.

Authors’ Closing Perspective

This conversation lies at the crossroads of innovation, resilience, and institutional intelligence. As nations invest in artificial intelligence to boost growth, the real advantage will stem from how human judgment, intent, and experience are integrated with technology. Policymakers should shift focus from “technological disruption” to “institutional continuity.” Sustainable innovation thrives on continuity, not disruption. Countries that preserve human reasoning within digital systems, rather than replacing it, will be better equipped to adapt, manage risk, and innovate responsibly in an increasingly complex world.

About the Authors

Dr. Abdullah Al Rashdi is a senior digital transformation and enterprise architecture leader with over 30 years of experience across high-risk industrial and public sector environments. He specialises in national digital capability, governance modernisation, and large-scale technology strategy. Holding a Doctorate in Digital Transformation, he advises on aligning emerging technologies, including AI, with institutional resilience and long-term policy objectives.


Jordan Richards is a digital transformation and knowledge governance advisor with over 20 years of international experience across oil and gas, government, and regulated sectors. He works with senior leaders to align AI, enterprise architecture, and institutional knowledge with accountable governance, operational performance, and long-term resilience.
